Mathematics for Applied Sciences (Osnabrück 2023-2024)/Part I/Lecture 26

- Rank of matrices

Let $K$ be a field, and let $M$ denote an $m \times n$-matrix over $K$. Then the dimension of the linear subspace of $K^m$ generated by the columns is called the column rank of the matrix, written $\operatorname{rk} M$.

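Let $K$ denote a field, and let $V$ and $W$ denote $K$-vector spaces of dimensions $n$ and $m$. Let

$$\varphi \colon V \longrightarrow W$$

be a linear mapping, which is described by the matrix $M \in \operatorname{Mat}_{m \times n}(K)$, with respect to bases of the spaces. Then

$$\operatorname{rk} \varphi = \operatorname{rk} M.$$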

Proof

To formulate the next statement, we introduce the row rank of an $m \times n$-matrix to be the dimension of the linear subspace of $K^n$ generated by the rows.

Let $K$ be a field, and let $M$ denote an $m \times n$-matrix over $K$. Then the column rank coincides with the row rank. If $M$ is transformed with elementary row manipulations to a matrix $M'$ in the sense of Theorem 21.9, then the rank equals the number of relevant rows of $M'$.

Let $r$ denote the number of the relevant rows in the matrix $M'$ in echelon form, gained by elementary row manipulations. We have to show that this number is the column rank and the row rank of $M'$ and of $M$. In an elementary row manipulation, the linear subspace generated by the rows is not changed, therefore the row rank is not changed. So the row rank of $M$ equals the row rank of $M'$. This matrix has row rank $r$, since the first $r$ rows are linearly independent and, besides these, there are only zero rows. But $M'$ also has column rank $r$, since the columns where there is a new step are linearly independent, and the other columns are linear combinations of these columns. By Exercise 26.2, the column rank is preserved by elementary row manipulations.

Both ranks coincide, so we only talk about the rank of a matrix.
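The argument above can be tried out numerically. The following is a minimal sketch, assuming rational entries (Python's `Fraction`); the helper names `rank` and `transpose` are chosen for this illustration only. It counts the pivot rows produced by elementary row manipulations and compares the result for a matrix and for its transpose, that is, row rank against column rank.

```python
from fractions import Fraction

def rank(matrix):
    """Rank via elementary row manipulations: count the pivot (relevant) rows."""
    rows = [[Fraction(x) for x in row] for row in matrix]
    n_rows = len(rows)
    n_cols = len(rows[0]) if rows else 0
    pivot_row = 0
    for col in range(n_cols):
        if pivot_row == n_rows:
            break
        # Look for a row at or below pivot_row with a nonzero entry in this column.
        pivot = next((r for r in range(pivot_row, n_rows) if rows[r][col] != 0), None)
        if pivot is None:
            continue  # no new step in this column
        rows[pivot_row], rows[pivot] = rows[pivot], rows[pivot_row]
        # Clear the entries below the pivot.
        for r in range(pivot_row + 1, n_rows):
            factor = rows[r][col] / rows[pivot_row][col]
            rows[r] = [a - factor * b for a, b in zip(rows[r], rows[pivot_row])]
        pivot_row += 1
    return pivot_row

def transpose(matrix):
    """Interchange rows and columns."""
    return [list(col) for col in zip(*matrix)]

M = [[1, 2, 3],
     [2, 4, 6],
     [1, 0, 1]]

# Row rank of M and row rank of the transpose (= column rank of M).
print(rank(M), rank(transpose(M)))  # prints "2 2"
```

Working with `Fraction` instead of floating point numbers avoids rounding issues when deciding whether an entry is zero.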

Let $K$ be a field, and let $M$ denote an $n \times n$-matrix over $K$. Then the following statements are equivalent.

- $M$ is invertible.

- The rank of $M$ is $n$.

- The rows of $M$ are linearly independent.

- The columns of $M$ are linearly independent.

The equivalence of (2), (3) and (4) follows from the definition and from Lemma 26.3.

For the equivalence of (1) and (2), let us consider the linear mapping

$$\varphi \colon K^n \longrightarrow K^n$$

defined by $M$. The property that the column rank equals $n$ is equivalent to the map being surjective, and this is, due to Corollary 25.4, equivalent to the map being bijective. Because of Lemma 25.11, bijectivity is equivalent to the matrix being invertible.

- Determinants

The determinant is only defined for square matrices. For small $n$, the determinant can be computed easily.

This follows with a simple induction directly from the recursive definition of the determinant.
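The recursive definition itself is not reproduced in the text above. The sketch below assumes that it is the expansion along the first column, $\det M = \sum_{i=1}^{n} (-1)^{i+1} a_{i1} \det M_i$, where $M_i$ arises from $M$ by deleting the first column and the $i$-th row; this matches the later remark that the case $j = 1$ of the expansion formula is the recursive definition. The function name `det` is chosen for this illustration only. As a plausibility check, the result for an upper triangular matrix is compared with the product of its diagonal entries.

```python
def det(matrix):
    """Determinant by expansion along the first column (assumed recursive definition)."""
    n = len(matrix)
    if n == 1:
        return matrix[0][0]
    total = 0
    for i in range(n):
        # Delete row i and the first column.
        minor = [row[1:] for k, row in enumerate(matrix) if k != i]
        # With 0-based i, (-1)**i equals the sign (-1)^(i+1) for 1-based indices.
        total += (-1) ** i * matrix[i][0] * det(minor)
    return total

upper_triangular = [[2, 5, 7],
                    [0, 3, 1],
                    [0, 0, 4]]
identity = [[1, 0, 0],
            [0, 1, 0],
            [0, 0, 1]]

print(det(upper_triangular))  # 24, the product 2 * 3 * 4 of the diagonal entries
print(det(identity))          # 1
```

The recursion needs on the order of $n!$ multiplications, so it is only practical for small $n$.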

- Multilinearity

We want to show that the recursively defined determinant is a "multilinear" and "alternating" mapping, where we identify

$$\operatorname{Mat}_n(K) \cong (K^n)^n,$$

so a matrix is identified with the $n$-tuple of the rows of the matrix. We write a matrix as a column tuple

$$\begin{pmatrix} v_1 \\ \vdots \\ v_n \end{pmatrix},$$

where the entries $v_i$ are row vectors of length $n$.

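Let $K$ be a field, and $n \in \mathbb{N}_+$. Then the determinant

$$\operatorname{Mat}_n(K) = (K^n)^n \longrightarrow K, \quad M \longmapsto \det M,$$

is multilinear. This means that for every $k \in \{1, \ldots, n\}$, for every choice of $n-1$ vectors $v_1, \ldots, v_{k-1}, v_{k+1}, \ldots, v_n \in K^n$, and for any $u, w \in K^n$, the identity

$$\det \begin{pmatrix} v_1 \\ \vdots \\ v_{k-1} \\ u + w \\ v_{k+1} \\ \vdots \\ v_n \end{pmatrix} = \det \begin{pmatrix} v_1 \\ \vdots \\ v_{k-1} \\ u \\ v_{k+1} \\ \vdots \\ v_n \end{pmatrix} + \det \begin{pmatrix} v_1 \\ \vdots \\ v_{k-1} \\ w \\ v_{k+1} \\ \vdots \\ v_n \end{pmatrix}$$

holds, and for $s \in K$, the identity

$$\det \begin{pmatrix} v_1 \\ \vdots \\ v_{k-1} \\ s u \\ v_{k+1} \\ \vdots \\ v_n \end{pmatrix} = s \det \begin{pmatrix} v_1 \\ \vdots \\ v_{k-1} \\ u \\ v_{k+1} \\ \vdots \\ v_n \end{pmatrix}$$

holds.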

Proof

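Let $K$ be a field, and $n \in \mathbb{N}_+$. Then the determinant

$$\operatorname{Mat}_n(K) = (K^n)^n \longrightarrow K, \quad M \longmapsto \det M,$$

has the following properties.

- If in $M$ two rows are identical, then $\det M = 0$. This means that the determinant is alternating.

- If we exchange two rows in $M$, then the determinant changes by the factor $-1$.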

Proof
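The multilinear and alternating properties can be checked on a concrete example. This is only a numerical illustration on one choice of integer vectors, not a proof; the function `det` implements the expansion along the first column (assumed here to be the recursive definition), and the vector names are chosen for this sketch.

```python
def det(matrix):
    """Determinant by expansion along the first column (assumed recursive definition)."""
    n = len(matrix)
    if n == 1:
        return matrix[0][0]
    return sum((-1) ** i * matrix[i][0]
               * det([row[1:] for k, row in enumerate(matrix) if k != i])
               for i in range(n))

# Rows of a 3x3 matrix; the middle row will be varied.
v1 = [1, 2, 0]
v3 = [4, 0, 1]
u = [0, 1, 3]
w = [2, -1, 1]
s = 5

u_plus_w = [a + b for a, b in zip(u, w)]
s_times_u = [s * a for a in u]

# Additivity and homogeneity in the second row.
additive = det([v1, u_plus_w, v3]) == det([v1, u, v3]) + det([v1, w, v3])
homogeneous = det([v1, s_times_u, v3]) == s * det([v1, u, v3])

# Two identical rows give determinant 0; exchanging two rows flips the sign.
alternating = det([v1, u, v1]) == 0
sign_change = det([u, v1, v3]) == -det([v1, u, v3])

print(additive, homogeneous, alternating, sign_change)
```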

Let $K$ be a field, and let $M$ denote an $n \times n$-matrix over $K$. Then the following statements are equivalent.

- We have $\det M \neq 0$.

- The rows of $M$ are linearly independent.

- $M$ is invertible.

- We have $\operatorname{rk} M = n$.

The relation between rank, invertibility and linear independence was proven in Corollary 26.4. Suppose now that the rows are linearly dependent. After exchanging rows, we may assume that $v_n = \sum_{i=1}^{n-1} s_i v_i$. Then, due to Theorem 26.9 and Theorem 26.10, we get

$$\det M = \det \begin{pmatrix} v_1 \\ \vdots \\ v_{n-1} \\ \sum_{i=1}^{n-1} s_i v_i \end{pmatrix} = \sum_{i=1}^{n-1} s_i \det \begin{pmatrix} v_1 \\ \vdots \\ v_{n-1} \\ v_i \end{pmatrix} = 0.$$

Now suppose that the rows are linearly independent. Then, by exchanging rows, scaling rows, and adding a row to another row, we can transform the matrix successively into the identity matrix. During these manipulations, the determinant is multiplied by some factor $\neq 0$. Since the determinant of the identity matrix is $1$, due to Lemma 26.8, the determinant of the initial matrix is $\neq 0$.
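The proof idea also gives a practical way to compute determinants: perform row manipulations and keep track of the factor by which the determinant changes. The following is a minimal sketch over the rationals; the function name `det_by_elimination` is chosen for this illustration only. It uses that a row exchange changes the sign and that adding a multiple of one row to another leaves the determinant unchanged (both follow from the multilinear and alternating properties), and it multiplies the pivots instead of scaling them to $1$.

```python
from fractions import Fraction

def det_by_elimination(matrix):
    """Determinant via row manipulations, tracking how each step changes it."""
    rows = [[Fraction(x) for x in row] for row in matrix]
    n = len(rows)
    result = Fraction(1)
    for col in range(n):
        pivot = next((r for r in range(col, n) if rows[r][col] != 0), None)
        if pivot is None:
            # No pivot in this column: the rows are linearly dependent.
            return Fraction(0)
        if pivot != col:
            rows[col], rows[pivot] = rows[pivot], rows[col]
            result = -result  # exchanging two rows changes the sign
        result *= rows[col][col]  # factor pulled out of the pivot row
        for r in range(col + 1, n):
            factor = rows[r][col] / rows[col][col]
            # Adding a multiple of the pivot row does not change the determinant.
            rows[r] = [a - factor * b for a, b in zip(rows[r], rows[col])]
    return result

print(det_by_elimination([[2, 1, 0], [1, 3, 1], [0, 1, 4]]))  # 18
print(det_by_elimination([[1, 2], [2, 4]]))                   # 0, the rows are dependent
```

Unlike the recursive expansion, this needs only on the order of $n^3$ arithmetic operations.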

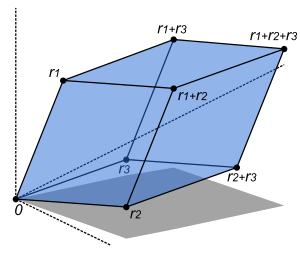

In case $K = \mathbb{R}$, the determinant is closely related to volumes of geometric objects. If we consider in $\mathbb{R}^n$ vectors $v_1, \ldots, v_n$, then they span a parallelotope. This is defined by

$$P := \left\{ s_1 v_1 + \cdots + s_n v_n \mid s_i \in [0,1] \right\}.$$

It consists of all linear combinations of these vectors where all the scalars belong to the unit interval. If the vectors are linearly independent, then this is a "voluminous" body; otherwise it is an object of smaller dimension. Now the relation

$$\operatorname{vol} P = \left| \det (v_1, \ldots, v_n) \right|$$

holds, saying that the volume of the parallelotope is the modulus of the determinant of the matrix consisting of the spanning vectors as columns (or rows).
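For $n = 2$ the parallelotope is a parallelogram, and the statement can be compared with an independent area computation. The sketch below uses the shoelace formula for polygon areas as that independent check; the function names and the example vectors are chosen for this illustration only.

```python
def det2(v1, v2):
    """Determinant of the 2x2 matrix with rows v1 and v2."""
    return v1[0] * v2[1] - v1[1] * v2[0]

def shoelace_area(vertices):
    """Area of a simple polygon given by its vertices in order."""
    total = 0
    for (x1, y1), (x2, y2) in zip(vertices, vertices[1:] + vertices[:1]):
        total += x1 * y2 - x2 * y1
    return abs(total) / 2

v1 = (3, 0)
v2 = (1, 2)

# Corners of the parallelogram spanned by v1 and v2, in order.
corners = [(0, 0), v1, (v1[0] + v2[0], v1[1] + v2[1]), v2]

print(abs(det2(v1, v2)), shoelace_area(corners))  # both equal 6
```

If the two vectors are linearly dependent, the parallelogram degenerates to a segment and both computations give $0$.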

- The multiplication theorem for determinants

We discuss, without proofs, further important theorems about the determinant. The proofs rely on a systematic account of the properties which are characteristic for the determinant, namely being multilinear and alternating. These properties, together with the condition that the determinant of the identity matrix is $1$, already determine the determinant uniquely.

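Let $K$ denote a field, and $n \in \mathbb{N}_+$. Then for matrices $A, B \in \operatorname{Mat}_n(K)$, the relation

$$\det (A \circ B) = \det A \cdot \det B$$

holds.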

Proof
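The multiplication theorem can be checked on examples. This is a numerical illustration only, with helper names (`det`, `matmul`) chosen for this sketch; `det` uses the expansion along the first column, assumed to be the recursive definition.

```python
def det(matrix):
    """Determinant by expansion along the first column (assumed recursive definition)."""
    n = len(matrix)
    if n == 1:
        return matrix[0][0]
    return sum((-1) ** i * matrix[i][0]
               * det([row[1:] for k, row in enumerate(matrix) if k != i])
               for i in range(n))

def matmul(a, b):
    """Matrix product of two matrices given as lists of rows."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]

A = [[1, 2],
     [3, 4]]
B = [[0, 1],
     [5, -2]]

print(det(matmul(A, B)), det(A) * det(B))  # both equal 10
```

Note that the product of the matrices depends on the order of the factors, while the product of their determinants does not.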

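The transposed matrix $M^{\text{tr}}$ arises from $M$ by interchanging the roles of the rows and the columns. For example, we have

$$\begin{pmatrix} a & b & c \\ d & e & f \end{pmatrix}^{\text{tr}} = \begin{pmatrix} a & d \\ b & e \\ c & f \end{pmatrix}.$$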

Proof

This implies that we can compute the determinant also by expanding with respect to the rows, as the following statement shows.

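Let $K$ be a field, and let $M = (a_{ij})_{ij}$ be an $n \times n$-matrix over $K$. For $i, j \in \{1, \ldots, n\}$, let $M_{ij}$ be the matrix which arises from $M$ by leaving out the $i$-th row and the $j$-th column. Then (for $n \geq 2$ and for every fixed $i$ and $j$)

$$\det M = \sum_{i=1}^{n} (-1)^{i+j} a_{ij} \det M_{ij} = \sum_{j=1}^{n} (-1)^{i+j} a_{ij} \det M_{ij}.$$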

For $j = 1$, the first equation is the recursive definition of the determinant. From that statement, the case $i = 1$ follows, due to Theorem 26.15. By exchanging columns and rows, the statement follows in full generality, see Exercise 26.13.
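The expansion along an arbitrary row or column can be sketched as follows. The helper names are chosen for this illustration only, and indices are 0-based in the code, which does not affect the sign $(-1)^{i+j}$. The base function `det` is the expansion along the first column, that is, the case $j = 1$.

```python
def minor(matrix, i, j):
    """Matrix obtained by leaving out row i and column j (0-based)."""
    return [row[:j] + row[j + 1:] for k, row in enumerate(matrix) if k != i]

def det(matrix):
    """Expansion along the first column (the case j = 1 in the text)."""
    n = len(matrix)
    if n == 1:
        return matrix[0][0]
    return sum((-1) ** i * matrix[i][0] * det(minor(matrix, i, 0)) for i in range(n))

def expand_along_column(matrix, j):
    """Sum over the rows i, for a fixed column j."""
    n = len(matrix)
    return sum((-1) ** (i + j) * matrix[i][j] * det(minor(matrix, i, j)) for i in range(n))

def expand_along_row(matrix, i):
    """Sum over the columns j, for a fixed row i."""
    n = len(matrix)
    return sum((-1) ** (i + j) * matrix[i][j] * det(minor(matrix, i, j)) for j in range(n))

M = [[2, 1, 0],
     [1, 3, 1],
     [0, 1, 4]]

print([expand_along_column(M, j) for j in range(3)])  # [18, 18, 18]
print([expand_along_row(M, i) for i in range(3)])     # [18, 18, 18]
```

In hand computations one typically expands along a row or column that contains many zeros, since the corresponding summands vanish.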

- The determinant of a linear mapping

Let

$$\varphi \colon V \longrightarrow V$$

be a linear mapping from a vector space of dimension $n$ into itself. This is described by a matrix $M \in \operatorname{Mat}_n(K)$ with respect to a given basis. We would like to define the determinant of the linear mapping by the determinant of the matrix. However, we have to check that this is well-defined, since a linear mapping is described by quite different matrices with respect to different bases. But, because of Corollary 25.9, when we have two describing matrices $M$ and $N$, and the matrix $B$ for the change of bases, we have the relation $N = B M B^{-1}$. The multiplication theorem for determinants then yields

$$\det N = \det \left( B M B^{-1} \right) = (\det B)(\det M)(\det B^{-1}) = (\det B)(\det B)^{-1}(\det M) = \det M,$$

so that the following definition is in fact independent of the basis chosen.

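Let $K$ denote a field, and let $V$ denote a $K$-vector space of finite dimension. Let

$$\varphi \colon V \longrightarrow V$$

be a linear mapping, which is described by the matrix $M$, with respect to a basis. Then

$$\det \varphi := \det M$$

is called the determinant of the linear mapping $\varphi$.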
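The independence of the chosen basis can be illustrated for $2 \times 2$ matrices. In this sketch the matrix names `M` and `B` follow the discussion above, while the helper functions and the concrete entries are chosen for this illustration only; the inverse is computed with the usual formula for $2 \times 2$ matrices over the rationals.

```python
from fractions import Fraction

def det2(m):
    """Determinant of a 2x2 matrix."""
    return m[0][0] * m[1][1] - m[0][1] * m[1][0]

def inverse2(m):
    """Inverse of an invertible 2x2 matrix, using exact rational arithmetic."""
    d = Fraction(det2(m))
    if d == 0:
        raise ValueError("matrix is not invertible")
    return [[m[1][1] / d, -m[0][1] / d],
            [-m[1][0] / d, m[0][0] / d]]

def matmul(a, b):
    """Matrix product of two matrices given as lists of rows."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]

M = [[1, 2],
     [3, 4]]   # describing matrix with respect to one basis
B = [[2, 1],
     [1, 1]]   # change of basis matrix (invertible)

# Describing matrix with respect to the other basis.
N = matmul(matmul(B, M), inverse2(B))

print(det2(M), det2(N))  # both equal -2
```

The entries of `N` differ from those of `M`, but the determinant is the same, so it is a property of the linear mapping itself.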

