Psycholinguistics/Chronometry

Introduction

The chronometric perspective examines speech production by using reaction times to tease apart its various stages. This chapter traces the evolution of the chronometric approach and reviews landmark findings in several of its larger research areas, such as picture-word interference tasks and word frequency effects. The results of these studies are then compared with the two dominant classes of speech production models, interactive cascading models such as Dell's two-step model and discrete models such as Roelofs' WEAVER model, and their compatibility with each is explored.

Research Areas

Naming Latencies

The seminal work in the chronometric exploration of speech production is arguably that of James McKeen Cattell in the 1880s. Cattell discovered that naming a list of 100 object drawings took considerably longer than reading a list of words naming the same 100 objects. This was some of the earliest evidence that speech production is not a simple, one-step process.

This effect was further explored in 1967 by Paul Fraisse, who demonstrated that the effects observed by Cattell could not be explained by visual differences between the drawing stimuli and the written word stimuli. He did so by recording naming latencies for the stimulus "O" when it was perceived as a circle, as the letter O, or as a zero. The differences in response times were clear: when the stimulus was named as "circle", the mean reaction time (RT) was 100ms to 150ms longer than when it was read as either "O" or "zero". These results led to the conclusion that different coding processes occur when naming an object as opposed to reading a word.

The Stroop Paradigm

The Stroop paradigm demonstrated both semantic interference and semantic facilitation, and showed the automaticity of word naming in comparison to color naming.

In 1935, John Ridley Stroop published what would become one of the most cited papers in cognitive psychology, in which the oft-replicated Stroop task was first employed. The three conditions of Stroop's experiment each used two of three sets of stimuli:

- a list of color words written in black ink ("red", "blue" etc.)

- a list of color words printed in an incongruent ink (e.g. "red" printed in blue ink)

- a list of colored squares

By comparing the time required to work through these lists, Stroop was able to make a number of observations. First, when asked to name the ink color and disregard the written word in the second list, participants took significantly longer than when naming the colors of simple colored squares. This was termed a semantic interference effect, as it was believed to arise from the semantic information of the written color word conflicting with the color of the ink. Second, there was no significant difference between the time required to read words printed in varying ink colors and the time required to read a list of color words all printed in black ink. This observation was termed the Stroop asynchrony (van Maanen et al., 2009). Also of note, the average time to complete a colored-square naming list was significantly longer than the time required to finish a reading list, regardless of ink color. Together, these findings reinforced the notion that word naming operates on a faster pathway than object (or in this case, color) naming. Further research on the topic (see MacLeod, 1991, for a comprehensive review) has confirmed and expanded upon Stroop's original findings, and has provided evidence for some degree of semantic facilitation as well. This effect occurs when the written word and the ink color are congruent, e.g. the ink color blue is named faster if the word printed in that ink is "blue".
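The comparisons described above amount to simple difference scores between list-completion times. The following is a minimal sketch of that arithmetic, not Stroop's own analysis; the condition labels and all timing values are made up for illustration.

```python
from statistics import mean

def stroop_effects(times_s):
    """Return difference scores (in seconds) between list conditions.

    `times_s` maps a condition label to a list of completion times,
    one per participant. The labels are hypothetical names chosen
    for this sketch.
    """
    avg = {cond: mean(vals) for cond, vals in times_s.items()}
    return {
        # Cost of an incongruent word while naming ink colors
        "interference": avg["name_ink_incongruent"] - avg["name_squares"],
        # Cost of incongruent ink while reading words (expected near zero)
        "reverse_interference": avg["read_incongruent_ink"] - avg["read_black_ink"],
        # How much slower color naming is than word reading
        "naming_vs_reading": avg["name_squares"] - avg["read_black_ink"],
    }

# Made-up completion times in seconds, for illustration only
example = {
    "read_black_ink": [41.0, 43.0],
    "read_incongruent_ink": [43.0, 45.0],
    "name_squares": [62.0, 64.0],
    "name_ink_incongruent": [108.0, 112.0],
}
effects = stroop_effects(example)
print(effects)
```

The same function could be applied to the stopwatch times collected in the learning module at the end of this chapter, provided the conditions are mapped onto the labels used here.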

Picture/Word Interference Tasks

Based on the Stroop task, Rosinski and colleagues (1975) created the picture-word interference (PWI) task. Rosinski presented line drawings of common objects with distractor words superimposed on them. Large increases in latency were observed when participants were told to name the object and ignore the word, but only when the word was semantically incongruent with the object to be identified. Furthermore, this interference was not observed when the task was reversed: the drawings did not strongly interfere with the reading of the words, regardless of their semantic relationship to the word. These findings mirror the semantic interference demonstrated in the Stroop literature, and their widespread replication has led many to believe that the underlying mechanism of interference in Stroop and PWI tasks is in fact the same (MacLeod, 1991; van Maanen et al., 2009).

In 1979, Lupker further refined the nature of the semantic interference effect by using a microphone to record voice onset times for each individual trial, instead of recording completion times for entire lists as had been the tradition. Lupker's results showed that words from the same semantic category as the target object produce the most interference, regardless of how strongly they are associated with the target. For example, naming a picture of a mouse met with more interference when the distractor was "dog" (which belongs to the same semantic category, "animal") than when it was "cheese", a word most people commonly associate with mice. The picture below provides an example of each type of pairing.

Lupker (1982) then went on to examine the effects that orthographic and phonological properties of the distractor words have on object naming. His first experiment demonstrated that orthographic overlap facilitates object naming in comparison to an unrelated word, but still produces mild interference in comparison to naming a picture in the absence of any distractor. He also demonstrated a mild phonological facilitation effect when the distractor word and the target's name were phonologically identical except for their initial sounds but almost entirely different orthographically, e.g. plane-brain. This facilitatory effect was also observable with phonologically similar, pronounceable nonwords. As with the orthographic effects, this phonological facilitation holds only in comparison to unrelated distractor words; the picture alone still yields the fastest RT.

The next real breakthrough in chronometric research came from varying the stimulus onset asynchrony (SOA), the interval between the onsets of the distractor and the target, in the PWI tasks of picture naming, word reading, picture categorization, and word categorization (Glaser & Düngelhoff, 1984). Negative SOAs indicate that the distractor preceded the target. Glaser and Düngelhoff distinguished three categories of effects in their results. Slow effects occurred at SOAs of -200ms to -400ms, and could be either facilitating or interfering, depending on the task and the relationship between distractor and target. Fast effects occurred at ±100ms, always took the form of interference, and are exemplified by the classic Stroop task. Finally, they also observed some retroactive effects, in which distractor stimuli presented up to 300ms after the target stimuli interfered with the response to the target. These SOA/RT curves indicated a functional similarity between word reading and picture categorization, as well as between word categorization and picture naming. Another important finding comes from their comparison of picture categorization and picture naming: the dramatic reversal of inhibition clearly indicates that there is some difference between the internal processing of verbal and nonverbal stimuli.
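The three SOA windows described above can be written down as a small lookup. This is only a sketch: the function name is hypothetical, negative values are assumed to mean that the distractor precedes the target, and the exact boundaries between windows (for instance, where "fast" ends and "retroactive" begins) are assumptions made for illustration rather than values reported by Glaser and Düngelhoff.

```python
def classify_soa(soa_ms):
    """Map an SOA in milliseconds to the effect category named in the text.

    Negative SOA is assumed to mean the distractor appears before the target.
    Boundary handling is an assumption of this sketch.
    """
    if -400 <= soa_ms <= -200:
        return "slow"          # facilitation or interference, task dependent
    if -100 <= soa_ms <= 100:
        return "fast"          # interference, as in the classic Stroop task
    if 100 < soa_ms <= 300:
        return "retroactive"   # distractor arrives after the target
    return "unclassified"      # outside the windows described in the text

for soa in (-300, 0, 200, -150):
    print(soa, classify_soa(soa))
```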

In 1990, Schriefers, Meyer, and Levelt introduced another major change to the PWI paradigm by presenting the distractor word as an auditory stimulus. The results were in keeping with those of more standard PWI tasks, indicating that the paradigm was valid. Additionally, they compared the time course of the effects of semantic and phonological distractors. There was a clear difference in both the timing and the nature of these effects. Semantic distractors created the typical interference demonstrated in other studies, but only when they were presented at an SOA of -150ms. Phonological distractors led to varying degrees of facilitation. In the 0ms SOA condition, phonological facilitation was observed relative to the unrelated condition, while in the +150ms SOA condition the facilitation was apparent even in comparison with the silence (picture alone) condition. Because these effects are temporally distinct, the results have been used to support the theory that word production involves two discrete steps: semantic activation, followed by phonological activation. Additionally, a distinction was identified between categorical processing and semantic processing, in that semantic distractor words had no impact on an image categorization task.

Implicit Priming

Implicit priming is another experimental method that has proven illuminating to the field of speech production. While much work had been done on the nature and time course of semantic processing in word production, phonological research had been somewhat lacking. Experiments by Meyer and several colleagues (1990, 1991, 1997) were able to cast some light on the nature of phonological and metrical processing using the implicit priming paradigm. In these experiments, participants are presented with several trigger-response word pairs to memorize, e.g. signal-beacon, glass-beaker. Once the word pairs have been learned, one of the trigger words is presented to the participant, and the time taken to name its response word is recorded. If all of the response words have the same initial syllable (e.g. beacon, beaker, beaver), a priming effect is obtained. If the initial syllables vary, no such priming occurs. This indicates that phonological encoding proceeds in a segmental, left-to-right fashion: if participants know that all possible responses begin with the same syllable, this syllable can be prepared in advance, and response time is reduced.

In addition to uncovering this phonological data, the implicit priming paradigm has also provided some evidence for the existence of metrical frames. Roelofs and Meyer (1998) describe a metrical frame as a mental representation that contains information on a word's number of syllables and stress pattern. Using the implicit priming technique described above, they observed that when the response words all have the same stress pattern and the same number of syllables, a metrical priming effect occurs. However, there were a number of interesting interactions that led to inferences about the nature of phonological and metrical priming. First, the phonological priming effect can be blocked if the metrical structures of the words do not also match. The lack of priming for words that differ only in their number of syllables suggests that the metrical frame is not produced segmentally in the same manner as the phonological information; if it were, the number or type of syllables after the initial shared segments would not matter. Furthermore, for word lists in which each word has the same number of syllables and an identical stress pattern, metrical priming is not observed if the initial syllables do not match. Taken together, these findings indicate that metrical and phonological retrieval are separate, parallel processes. If both processes must be completed before the next step of speech production can begin (Roelofs & Meyer, 1998), this explains why the two effects are always observed in tandem: speeding up one and not the other will not produce a faster overall result.

While this conclusion explains the data quite well, it raises at least one major issue. If a left-to-right segmental process must be completed before speech output can begin, it stands to reason that longer words would take longer to process, and should therefore show longer reaction times. However, research in this area has shown that such length-induced latencies are elusive if not non-existent (Bachoud-Lévi et al., 1998). This suggests that articulation must be able to begin before phonological processing is entirely complete, but the exact conditions that must be met for articulation to begin are not yet known.

Word Frequency Effects

Oldfield and Wingfield first noticed in 1965 that response times to images of objects with common word names were faster than those with uncommon names. Further research (e.g. Wingfield, 1968; Jescheniak & Levelt, 1994) was able to determine that this effect is not due to varying speeds of visual stimulus processing, but is based instead at the point of response selection, i.e. the selection and production of the word corresponding to the image. As described above, there are multiple steps involved in the selection and production of a word, and the task became to localize the word frequency effect to one or more of these stages.

Jescheniak and Levelt (1994) ran a series of experiments attempting to do just that. No word frequency effect on reaction time was found for tasks tapping categorization, articulation, or the lemma level (measured here through a syntactically based gender decision on Dutch words). A significant effect was found in the phonological processing of words, tested by comparing reaction times for homophones with those for low-frequency and high-frequency control words; the two control lists were also compared with each other to ensure the validity of the experiment. Words on the high-frequency control list were chosen to match the combined frequency of all possible readings of the words on the homophone list, while the low-frequency control words were matched in frequency with the less common reading of the homophones. Reaction times were longest for the low-frequency controls, with the high-frequency controls and homophones much faster and not reliably distinguishable from each other. This is strong evidence that the word frequency effect arises from the frequency of the phonological form (lexeme) rather than from the frequency of lemma activation.

Chronometry and Speech Production Models

Interactive Models

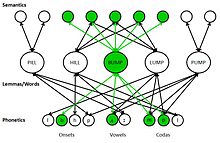

These models consist of a vast network of nodes of three basic types: semantic nodes, word (or lemma) nodes, and phonological nodes. These nodes are all interconnected, and function on the principle of spreading activation (Levelt, 1999). In speech production, the intended semantic nodes are activated first. These nodes contain the meaning or concept that is eventually going to be conveyed. The semantic nodes in turn activate the lemma nodes for the words best capable of conveying the intended concepts, and those lemma nodes in turn activate the phonological codes that make up the words. Because the nodes are so densely interconnected, the activation does not proceed linearly. Activating the desired concepts also activates related concepts, which then activate all of the lemma nodes associated with them, which in turn activate the phonological nodes connected to each of those lemma nodes. Because of this widespread activation, word choice in this model depends not on which nodes are activated, but on how strongly they are activated.
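The spreading of activation described above can be illustrated with a toy network. This is a minimal feedforward sketch, not Dell's model: every node name, link weight, and the `rate` parameter is invented for illustration, and feedback links and decay are left out for brevity.

```python
# Hypothetical toy network; all node names and weights are invented.
LINKS = {
    "concept:MOUSE": {"concept:DOG": 0.4, "lemma:mouse": 1.0},
    "concept:DOG": {"lemma:dog": 1.0},
    "lemma:mouse": {"phon:/m/": 1.0, "phon:/aU/": 1.0, "phon:/s/": 1.0},
    "lemma:dog": {"phon:/d/": 1.0, "phon:/O/": 1.0, "phon:/g/": 1.0},
}

def spread(activation, links, rate=0.5):
    """One update step: each node passes rate * weight * its activation onward."""
    updated = dict(activation)
    for source, targets in links.items():
        for target, weight in targets.items():
            incoming = rate * weight * activation.get(source, 0.0)
            updated[target] = updated.get(target, 0.0) + incoming
    return updated

# Start with only the intended concept active, then let activation spread.
state = {"concept:MOUSE": 1.0}
for _ in range(3):
    state = spread(state, LINKS)

# Selection depends on how strongly each lemma is activated, not on whether it is.
lemmas = {node: act for node, act in state.items() if node.startswith("lemma:")}
selected = max(lemmas, key=lemmas.get)
print(selected, lemmas)
```

In this sketch the related lemma and even its phonological nodes end up with some activation after a few steps, yet the target lemma remains the most active and is the one selected.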

The bidirectional nature of these models makes them appealing, as they could potentially provide a unified account of both speech perception and production; reading or hearing a word would activate the phonological nodes, starting the chain reaction in the reverse direction. Bidirectionality also handily explains a number of findings from speech error research, as well as the lexical bias effect (Levelt, 1999). The semantic interference effect repeatedly demonstrated by chronometric researchers (e.g. Rosinski, 1975; Lupker, 1979; MacLeod, 1991) can also be accounted for, to some extent, by these models. When a semantically related distractor is presented, nodes neighboring the target lemma node, already partially activated through spreading activation, receive a further boost in activation from the distractor. This boost makes the distractor appear to be a more valid candidate for selection, so ruling it out requires more conscious effort and more time, which results in longer latencies. If the boost were sufficient to surpass the activation of the target, a speech error may occur.

However, there are some chronometric results that are not easily reconciled with the interactive models. Experiments that have found different interference effects by varying SOA (e.g. Glaser & Düngelhoff, 1984; Schriefers, Meyer, & Levelt, 1990) are particularly damaging to interactive models, as they demonstrate specific windows of semantic and phonological activation and processing. Given the bidirectionality and spreading activation of the interactive models, such distinct phases are, at the least, unlikely. It is certainly possible that these results could be reproduced by tuning internal parameters of the interactive models (such as activation duration or rate of spread), but the process is somewhat inelegant.

Discrete Process Models

Along with interactive models, the other dominant theoretical perspective is that of discrete process models. These models are built on a spreading activation network similar to that of the interactive models, but with constraints placed on the direction and extent to which activation can spread. A popular discrete process model is the WEAVER model proposed by Roelofs in 1997. In WEAVER, activation spreads between concept nodes, from concept nodes to lemma nodes, and from lemma nodes back to concept nodes. However, activation does not spread to the morpho-phonological nodes associated with a lemma until that lemma is selected, and the morpho-phonological codes do not feed back into the lemma nodes. This model can more readily accommodate the data from the varied-SOA studies, as it dictates that there are distinct, non-overlapping stages for semantic and phonological processing. As it was built largely on chronometric data, WEAVER can account for the majority of effects typically observed in chronometric studies, including the semantic interference effect, phonological facilitation effects, picture categorization effects, and object naming latencies (Indefrey & Levelt, 2004).

However, just as the interactive models have difficulty incorporating chronometric data, the WEAVER model has difficulty with some speech error data. It relies primarily on a "monitor" external to the model, and on that monitor's occasional failure to detect phonological encoding errors, to account for observed speech errors (Levelt, 1999). This "monitor" is thought to be more likely to miss errors that produce actual words, hence the lexical bias effect, and more likely still to miss errors that produce actual words that are semantically related to the target word, hence the existence of mixed errors.
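The key constraint of a discrete model, that phonological codes become active only for the lemma that has been selected, can be sketched in a few lines. This is a simplified illustration and not the WEAVER implementation; the function name, the lemma activation values, and the phoneme labels are all hypothetical.

```python
def encode_discrete(lemma_activation, lemma_to_phonemes):
    """Select the most active lemma, then activate only its phonological codes."""
    selected = max(lemma_activation, key=lemma_activation.get)
    # Every phonological code starts inactive, including those of competitors.
    phon = {p: 0.0 for codes in lemma_to_phonemes.values() for p in codes}
    # Activation reaches the form level only after selection has happened.
    for p in lemma_to_phonemes[selected]:
        phon[p] = 1.0
    return selected, phon

# Invented activation levels: the competitor lemma is partially active.
lemma_activation = {"mouse": 1.5, "dog": 0.3}
lemma_to_phonemes = {"mouse": ["m", "aU", "s"], "dog": ["d", "O", "g"]}

selected, phon = encode_discrete(lemma_activation, lemma_to_phonemes)
print(selected, phon)
```

Although the competitor lemma carries some activation here, none of it reaches its phonological codes, which is what keeps the semantic and phonological stages from overlapping in this kind of model.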

Neuroimaging Research and the Time Course of Speech Production

A meta-analysis of 82 neuroimaging experiments (Indefrey & Levelt, 2004) has allowed for the creation of a relatively solid timeline of speech production. The process has been divided into three major steps: concept activation, lexical selection, and form encoding.

By looking at the results of studies involving picture categorization and picture-word matching, an estimate of the time required for concept activation can be obtained. Thorpe and colleagues (1996) used ERP measures in a go/no-go experiment involving picture categorization, and found that the ERPs for the go and no-go conditions began to differ approximately 150ms after the presentation of the image. This suggests that the image had been categorized and a decision made by the 150ms point, providing an upper-limit estimate for the time required for concept activation. The meta-analysis of similar ERP studies that used syntactic (lemma-based) attributes to determine the go/no-go response indicated that lemma activation takes approximately 75ms and immediately follows concept activation. The final stage, form encoding, involves three substages: phonological code retrieval, syllabification, and phonetic encoding. These substages appear to occur sequentially, and the compiled data in Indefrey and Levelt's (2004) meta-analysis indicate durations of approximately 80ms, 125ms, and 145ms respectively. Altogether, these stepwise estimates sum to a total processing time of roughly 600ms (575ms with the upper-limit figures quoted here), which is in keeping with the empirical data from most chronometric experiments.
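The timeline can be checked by adding up the stage durations quoted above. The sketch below uses only the figures given in this section, with the 150ms upper-limit estimate standing in for concept activation; the stage labels are shorthand chosen for this example. With these figures the running total comes to 575ms, slightly under the roughly 600ms total cited for the full process.

```python
from itertools import accumulate

# Stage durations in milliseconds, as quoted in the text above.
STAGES_MS = [
    ("concept activation (upper limit)", 150),
    ("lemma activation", 75),
    ("code retrieval", 80),
    ("syllabification", 125),
    ("phonetic encoding", 145),
]

durations = [duration for _, duration in STAGES_MS]
ends = list(accumulate(durations))  # cumulative end time of each stage

# (stage name, start in ms, end in ms), assuming strictly sequential stages
timeline = [(name, end - duration, end)
            for (name, duration), end in zip(STAGES_MS, ends)]
total_ms = ends[-1]

for name, start, end in timeline:
    print(f"{name}: {start}-{end} ms")
print("total:", total_ms, "ms")
```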

Conclusion

Chronometric explorations of speech production have provided a process-focused window into how speech is produced. Over time, and with the advent of new technologies, the results have only improved. From Cattell's early discoveries of object naming discrepancies to much more high-tech brain imaging studies, the underlying concepts have remained largely the same, and the information acquired has built better and more comprehensive models, such as WEAVER. In essence, the primary findings of chronometric studies indicate that speech production occurs in a sequential manner. First, the desired semantic concepts are activated, which then activate the specific words connected to those concepts. The desired word is then selected, phonologically encoded, and articulated.

Further research will help to pin down the timing and duration of each of these steps and, through the use of more complex imaging techniques, may continue to localize the regions of the brain where each step occurs.

Learning Module 1

The following are various examples of the Stroop Paradigm. With a partner, use a stopwatch to record the time it takes to read each list of words according to the instructions given.

List 1

Read through the entire list, starting at the top left-hand word and reading down the columns. Have your partner record your time for the entire list and follow your progress. If the reader makes an error, the timer states "try again" and the reader must correct the error before moving on. When you finish, switch roles with your partner.

List 2

Read through the entire list, starting at the top left-hand corner and reading down the columns. Have your partner record your time for the entire list and follow your progress. If the reader makes an error, the timer states "try again" and the reader must correct the error before moving on. When you finish, switch roles with your partner.

List 3

Read through the entire list, starting at the top left-hand word and reading down the columns. Ignore the color of the letters and read the words spelled by the letters. Have your partner record your time for the entire list and follow your progress. If the reader makes an error, the timer states "try again" and the reader must correct the error before moving on. When you finish, switch roles with your partner.

Next, read through the list a second time, this time ignoring the words spelled by the letters, and simply naming the colors of the letters. Have your partner record your time for the entire list and follow your progress. If the reader makes an error, the timer states "try again" and the reader must correct the error before moving on. When you finish, switch roles with your partner.

List 4

Read through the entire list, starting at the top left-hand word and reading down the columns. Ignore the color of the letters and read the words spelled by the letters. Have your partner record your time for the entire list and follow your progress. If the reader makes an error, the timer states "try again" and the reader must correct the error before moving on. When you finish, switch roles with your partner.

Next, read through the list a second time, this time ignoring the words spelled by the letters, and simply naming the colors of the letters. Have your partner record your time for the entire list and follow your progress. If the reader makes an error, the timer states "try again" and the reader must correct the error before moving on. When you finish, switch roles with your partner.

Questions

- What purpose does the first list serve in this experiment and why is it important?

- What reading was the fastest? The slowest? Why?

- Was there any observable difference between your times on List 1 and List 2? Why might this be the case?

- How is the last list different from the others? What does this change represent, and what added perspective does it lend?

- What are some potential confounds of this mini-experiment? How could you better access the constructs this experiment targets? What aspects of this paradigm might you change? Keep the same?

- How does this "whole list time" method compare to methodologies that record voice onset time on each individual trial? What are potential strengths and weaknesses of each method?

- How do your responses to the previous question impact the findings of the data you've collected? Do any of them generalize to the research mentioned in the chapter? If so, how could this have potentially impacted our understanding of speech production?

- The use of neural imaging studies in the study of speech production creates a window into the online processing of speech production not previously available. How do you project this will impact the future of research into speech production? Given the necessary resources, what tests would you most want to perform with these new technologies?

Learning Module 2

Using the research presented here as a jumping-off point, find support for either the WEAVER model or Dell's two-step interactive model and prepare a persuasive presentation in support of your model of choice. This list is not meant to be exhaustive; if you find another model you believe is more accurate, by all means prepare a presentation on it instead.

You should consider studies from various methodologies, schools of thought, languages, and investigators when preparing your arguments. For example, a good place to start might be the "Speech Errors" chapter of this course.

The presentations will largely follow the format of Pecha Kucha, in that each argument will be given 20 slides that advance automatically. Unlike the original Pecha Kucha format, the time limit for each slide for this assignment will be 30 seconds in order to allow for the additional explanation time required for some complex design protocols.

Completed arguments should be recorded and posted as video files to the Discussion Page of this chapter so that they can be reviewed and critiqued by the community and by those who have researched and presented the alternate model.

More information on Pecha Kucha can be found here.

References

- Bachoud-Lévi, A.-C., Dupoux, E., Cohen, L., & Mehler, J. (1998). Where is the length effect? A cross-linguistic study of speech production. Journal of Memory and Language, 39(3), 331-346. doi:10.1006/jmla.1998.2572

- Cattell, J. M. (1886). The time it takes to see and name objects. Mind, 11(41), 63-65.

- Fraisse, P. (1967). Latency of different verbal responses to the same stimulus. The Quarterly Journal of Experimental Psychology, 19(4), 353-355. doi:10.1080/14640746708400115

- Glaser, W. R., & Düngelhoff, F.-J. (1984). The time course of picture-word interference. Journal of Experimental Psychology: Human Perception and Performance, 10(5), 640-654. doi:10.1037/0096-1523.10.5.640

- Indefrey, P., & Levelt, W. J. M. (2004). The spatial and temporal signatures of word production components. Cognition, 92(1-2), 101-144. doi:10.1016/j.cognition.2002.06.001

- Jescheniak, J. D., & Levelt, W. J. M. (1994). Word frequency effects in speech production: Retrieval of syntactic information and of phonological form. Journal of Experimental Psychology: Learning, Memory, and Cognition, 20(4), 824-843. doi:10.1037/0278-7393.20.4.824

- Levelt, W. J. M. (1999). Models of word production. Trends in Cognitive Sciences, 3(6), 223-232. doi:10.1016/S1364-6613(99)01319-4

- Lupker, S. J. (1979). The semantic nature of response competition in the picture-word interference task. Memory & Cognition, 7, 485-495. doi:10.3758/BF03198265

- Lupker, S. J. (1982). The role of phonetic and orthographic similarity in picture-word interference. Canadian Journal of Psychology, 36, 349-367.

- MacLeod, C. M. (1991). Half a century of research on the Stroop effect: An integrative review. Psychological Bulletin, 109(2), 163-203. doi:10.1037/0033-2909.109.2.163

- Potter, M. C., So, K.-F., Von Eckardt, B., & Feldman, L. B. (1984). Lexical and conceptual representation in beginning and proficient bilinguals. Journal of Verbal Learning and Verbal Behavior, 23(1), 23-38. doi:10.1016/S0022-5371(84)90489-4

- Roelofs, A. (1997). The WEAVER model of word-form encoding in speech production. Cognition, 64(3), 249-284. doi:10.1016/S0010-0277(97)00027-9

- Roelofs, A., & Meyer, A. S. (1998). Metrical structure in planning the production of spoken words. Journal of Experimental Psychology: Learning, Memory, and Cognition, 24(4), 922-939. http://www.sciencedirect.com/science/article/B6X09-46NXHVW-7/2/f53aaa284c643cd9af1eeed33b7d383a

- Rosinski, R. R., Golinkoff, R. M., & Kukish, K. S. (1975). Automatic semantic processing in a picture-word interference task. Child Development, 46(1), 247-253. http://www.jstor.org/stable/1128859

- Schriefers, H., Meyer, A. S., & Levelt, W. J. M. (1990). Exploring the time course of lexical access in language production: Picture-word interference studies. Journal of Memory and Language, 29(1), 86-102. doi:10.1016/0749-596X(90)90011-N

- Stroop, J. R. (1935). Studies of interference in serial verbal reactions. Journal of Experimental Psychology, 18, 643-662.

- Thorpe, S., Fize, D., & Marlot, C. (1996). Speed of processing in the human visual system. Nature, 381, 520-522.

- van Maanen, L., van Rijn, H., & Borst, J. P. (2009). Stroop and picture-word interference are two sides of the same coin. Psychonomic Bulletin & Review, 16(6), 987-999. doi:10.3758/PBR.16.6.987. PMID 19966248.

- Wingfield, A. (1968). Effects of frequency on identification and naming of objects. American Journal of Psychology, 81, 226-234.